Tech

AWS and AZURE: 12 Key Differences

Are you new to the world of cloud computing? If so, you will want to know the key differences between AWS and Azure, two of the leading cloud computing platforms.

If you run an organization, you will likely need a cloud computing platform to manage your company’s data and systems. Whatever your background, cloud computing comes in handy, and for many organizations it has become essential to thrive.

Both AWS and Azure are popular platforms that offer broadly similar features, but there are some notable differences between them. You need to choose one for your organization according to your requirements.

In this article, we take a look at the competition between AWS and Azure and compare their features. After reading it, you will be able to evaluate both platforms for your company.

What Is AWS?

AWS (Amazon Web Services) services are designed to interconnect so they can work together to produce a result. AWS offers three types of services: infrastructure as a service, platform as a service, and software as a service. It is among the best cloud computing platforms currently available.

What Is AZURE?

Microsoft Azure was launched in 2010. It offers integrated cloud computing services such as compute, networking, databases, storage, mobile, and web applications. Users can achieve a higher level of efficiency with this integrated cloud computing system.

Full Comparison between AWS and AZURE: 12 Key Differences

1. Compute power

AWS: If you are an AWS EC2 user, you can configure your own VMs or select pre-configured machine images. You choose the size, power, memory, capacity, and number of VMs.

Azure: Azure users choose a Virtual Hard Disk (VHD), which is the equivalent of a machine image, to create a VM. The VHD can be pre-configured by Microsoft, a third party, or the user, and the user has to specify the amount of memory.

2. Storage

AWS: Temporary storage is allocated when an instance starts and is destroyed once the instance is terminated. Block storage, which works like a virtual hard disk, is also available, along with object storage through S3. AWS supports relational and NoSQL databases as well as Big Data.

Azure: Temporary storage is accessible through the D drive, and block storage for VMs is provided through Page Blobs. Azure supports relational databases, NoSQL, and Big Data through Azure Table and HDInsight. Azure users also get site recovery, import, and export features, and Azure Backup is available for site recovery.

3. Network

AWS: AWS offers a Virtual Private Cloud (VPC) that lets users create isolated networks within the cloud. Within a VPC, users can create subnets and work with private IP addresses, route tables, and network gateways.

Azure: With Microsoft Azure, you can likewise access private IP addresses, route tables, and subnets. Both providers currently offer ways to extend on-premises data centers into the cloud, as well as firewall options.

4. Pricing Models

AWS: AWS comes with a pricing model called “pay as you go.” You have to pay AWS per hour for usage. You can buy the instances on the following three models:

On-demand: Pay as per usage without any additional cost.

Reserved: Users reserve an instance for one to three years.

Spot: Users bid for spare capacity.

Azure: Similar to AWS, Azure uses a pay-as-you-go pricing model. The only difference is that Azure charges per minute instead of per hour. Because of this finer billing granularity, Azure users can estimate their costs more precisely.
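To see why billing granularity matters, here is a minimal sketch that compares the same usage billed per hour and per minute. The rate of 0.12 per hour and the 135 minutes of usage are made-up figures for illustration, not real AWS or Azure prices, and `billed_cost` is a hypothetical helper rather than part of either provider's tooling.

```python
import math

def billed_cost(minutes_used: int, hourly_rate: float, granularity_minutes: int) -> float:
    """Cost when usage is rounded up to the next billing increment."""
    increments = math.ceil(minutes_used / granularity_minutes)
    billed_minutes = increments * granularity_minutes
    return round(billed_minutes * hourly_rate / 60, 4)

# Hypothetical figures: 135 minutes of usage at a rate of 0.12 per hour.
per_hour = billed_cost(135, 0.12, granularity_minutes=60)   # rounded up to 3 full hours
per_minute = billed_cost(135, 0.12, granularity_minutes=1)  # billed for exactly 135 minutes

print(f"per-hour billing:   {per_hour:.2f}")
print(f"per-minute billing: {per_minute:.2f}")
```

With hourly billing, the partial third hour is charged in full, so the bill is higher than the per-minute figure for the same usage.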

5. Support plans

AWS: AWS support pricing is based on a sliding scale tied to monthly usage. This can be risky, because your AWS bill can become very high if you are a heavy user. If you expect extended usage, Azure may be the better choice.

Azure: Azure users are billed a flat monthly rate. If you are a heavy user, this is likely to be cheaper than the equivalent AWS service.

6. Integrations and open source

AWS: AWS offers more open-source integrations than Microsoft Azure, thanks to its good relationship with the open-source community. Available integrations include Jenkins and GitHub. AWS is also a Linux-server-friendly cloud computing platform.

Azure: Azure provides native integration for tools like VBS, SQL database, and Active Directory. It is a friendly platform for .NET developers, and it also offers Red Hat Enterprise Linux and Apache Hadoop clusters. As for open source, Microsoft has historically been slower than AWS to embrace this model.

7. Containers and orchestration support

AWS: Amazon keeps improving by investing in new services, which lets AWS keep up with new demands and deliver better outcomes and analytics. It has added features targeting IoT and machine learning. Depending on their needs, users can create high-performance, high-quality mobile apps.

Azure: When it comes to keeping up with new demands, Azure is not lagging behind. Azure brings Hadoop support with Azure HDInsight. It competes closely with Amazon because Azure runs both Windows and Linux containers. In addition, Windows Server 2016 delivers integration with Docker.

8. Compliance

AWS: Amazon brings certifications in ITAR, DISA, HIPAA, CJIS, and FIPS as it has good connections with the government agencies. Amazon Web Services is ideal for agencies who have to handle sensitive information because only authorized persons can access the cloud. It is a must for organizations holding critical data.

Azure: Microsoft Azure has more than 50 compliance offerings, including ITAR, DISA, HIPAA, CJIS, and FIPS. It provides a government-level cloud computing service that can only be accessed by specific people. So, the security level of Azure is comparable to that of AWS.

9. User-friendliness

AWS: When it comes to features, nothing can beat the number of features Amazon is providing. But the only issue is that AWS is not for beginners. IT experts claim that there is a learning curve with AWS. Once you learn how to use it, it will be the most powerful cloud computing system.

Azure: Microsoft Azure is a Windows-based platform, which makes it easier for beginners to use out of the box. There is little new to learn before you start using it. Users can integrate on-premises Windows servers with cloud instances to create a hybrid environment.

10. Licensing

AWS: On AWS, users need a dedicated host and Software Assurance to move their licenses to the cloud. Before migrating, users need to confirm that their Microsoft server application products are eligible for License Mobility through the Software Assurance program.

Azure: Users of Microsoft Azure can avoid paying for extra licensing if they confirm that their servers meet the requirements for license mobility. Where mobility is not available, licenses in Microsoft Azure are charged per usage.

11. Hybrid cloud capabilities

AWS: AWS introduced a 100-terabyte storage device that lets data be moved between the cloud and the client’s data centers. A hybrid element was needed, so AWS added one to its portfolio through a partnership with VMware. Amazon is still developing its hybrid cloud offering.

Azure: Microsoft Azure provides strong support for hybrid cloud services. The supported platforms include Azure StorSimple, Hybrid SQL Server, and Azure Stack. Hybrid capabilities are priced on a pay-as-you-go basis, and users can bring public Azure functionality to their on-premises data centers.

12. Deploying apps

AWS: Apps can be deployed on AWS with Elastic Beanstalk by creating a new application and then configuring the application and its environment. After that, the Elastic Beanstalk application can be accessed through Amazon Web Services.

Azure: For deploying apps, the Microsoft Azure portal is utilized to create an Azure app service. The developer tools are used to create the code for a starter web application.

Final Takeaway

AWS and Azure offer similar features and services, and neither one is simply better than the other. The decision of which service to choose depends on the needs of your business. No matter which service you go with, you will enjoy the benefits of a hyper-scalable cloud solution that will help your business grow.

What Is PSA Software Used for in Project Management?

In the complex world of project management, staying on top of every task, resource, and deadline is a formidable challenge. This is where Professional Services Automation (PSA) software enters the scene, offering a comprehensive solution that covers project management, time tracking, billing, and beyond.

Leveraging such a tool can significantly improve efficiency and streamline processes within any project-oriented organization. Below, we delve into the depths of PSA’s multifaceted role in project management, providing insights into why it is becoming an indispensable tool for professionals in this field.

Understanding PSA Software in the Context of Project Management

PSA software, short for Professional Services Automation, is a comprehensive suite of tools designed to streamline project management for professional service providers. It integrates various functionalities such as resource allocation, time tracking, and financial management to support the entire project lifecycle from start to finish.

PSA software offers a holistic view of projects, enabling managers to make informed decisions and optimize project components for success. By centralizing communication and project data in real time, PSA minimizes the risk of errors and miscommunication.

Its goal is to simplify project management processes, allowing teams to focus on delivering quality services rather than being overwhelmed by administrative tasks. So, what is PSA software? It’s the key to efficient and effective project management for service-based businesses.

Core Functions of PSA Software: Streamlining Operations

PSA software offers a suite of essential functions for optimizing project management. Key features include project planning, time tracking, expense management, invoicing, and resource management.

Project planning and scheduling allow managers to map timelines and assign tasks accurately, setting the foundation for project success. Time tracking and expense management ensure accurate recording of work hours and project expenses, maintaining budget control and maximizing billable hours.

Invoicing and billing processes are streamlined, ensuring accurate and timely billing, accelerating cash flow, and reducing errors.

Resource management facilitates the efficient allocation of human and material resources, optimizing productivity and work quality by selecting the right team members for tasks based on availability and skillsets.

How PSA Software Enhances Collaboration and Resource Management

Effective project management relies heavily on collaboration, and Professional Services Automation (PSA) software plays a vital role in enhancing this aspect.

By centralizing information and communication on a single platform, team members can collaborate seamlessly, share updates instantly, and access project details without delays. This synergy is crucial for maintaining a cohesive workflow.

PSA software also greatly improves resource management by providing a comprehensive view of available resources, enabling managers to assign them effectively based on project requirements and individual competencies. It helps in optimizing resource utilization, ensuring a balanced workload, and maximizing productivity.

PSA software facilitates better client interaction through client portals or integration capabilities, fostering transparent communication and project tracking from the client’s perspective. This transparency builds trust and enhances client satisfaction and retention rates.

Measuring Project Success with PSA Software’s Reporting Capabilities

Monitoring the health and progress of projects is crucial, and Professional Services Automation (PSA) software excels in providing robust reporting capabilities for this purpose. These systems offer customizable reports tracking key performance indicators, financial metrics, and project statuses, essential for measuring and communicating success to stakeholders.

PSA software’s real-time data analytics empower managers to swiftly identify trends and performance across multiple projects, facilitating the evaluation of methodologies and strategies for continual improvement.

Beyond mere numbers, PSA reporting captures the project narrative, highlighting achievements, challenges, and lessons learned. It serves as a platform for celebrating milestones and guiding future initiatives.

By consolidating vast project data into accessible reports, PSA software promotes transparency and accountability throughout the organization. This fosters a culture where every team member can understand their contributions to larger projects and organizational goals.

Altogether, PSA software has become essential in modern project management, providing tailored solutions for professional services firms. By streamlining operations, enhancing collaboration, and providing detailed project insights, PSA tools enable managers and teams to execute projects with precision and strategic insight.

As project management demands evolve, the importance of PSA software in achieving successful outcomes becomes more evident.

Harness the Emotion of Color in Web Design

Web design agencies, including those based in Houston, recognize that the significance of color goes beyond its aesthetic appeal. By leveraging color psychology, they can craft websites that evoke feelings, influence user perceptions, and establish brand identities.

For instance, a soothing blue color scheme can foster trust on a financial services site, whereas a website selling kids’ toys might benefit from the energetic feel of yellows and oranges.

The appropriate choice of colors not only enhances the attractiveness of a website but also subtly steers users towards desired actions like subscribing to a newsletter or making a purchase. Colors play a role in solidifying an online presence.

By using colors that align with the brand’s message and the target audience’s preferences, web designers in Houston can develop websites that make an impact.

- Evolutionary Influence: Throughout history, color has carried survival significance. Reddish hues might have signaled danger (like fire), while greens indicated safe, resource-rich environments. These associations are ingrained in our subconscious and continue to trigger emotional responses.

- Psychological Impact: Colors activate different parts of the brain. Warm colors (reds, oranges) tend to be stimulating and energetic, while cool colors (blues, greens) have a calming and relaxing effect. This can influence our mood, focus, and even heart rate.

- Cultural Meanings: Colors also hold symbolic value shaped by culture and experience. For instance, red might symbolize love in some cultures and danger in others. These learned associations can influence how we perceive brands, products, and even entire websites.

- Perception of Space and Size: Colors can manipulate our perception of size and space. Lighter colors tend to make an area feel more open and airy, while darker colors can create a sense of intimacy or closeness.

Color Psychology: The Fundamentals

The field of color psychology examines how the colors we perceive can impact our thoughts, emotions, and actions. It investigates the connections we make with shades, whether they stem from instinctive reactions (such as the vibrancy of red) or cultural meanings we’ve learned over time (like the purity associated with white).

Having a grasp of color psychology fundamentals provides designers with a toolbox. They can intentionally select colors to establish the atmosphere of a website and evoke feelings such as calmness or excitement. They can even alter perceptions of space by utilizing light and dark shades.

- The Color Wheel: A quick recap of primary, secondary, and tertiary colors.

- Warm vs. Cool: Emotional associations of warm (red, orange, yellow) and cool (blue, green, purple) colors.

- Individual Color Meanings: Delve into the common symbolism of colors in Western culture (e.g., red – passion/danger, blue – trust, green – growth).

Crafting Harmonious Color Palettes: A Systematic Approach

Creating color palettes involves a mix of knowledge and gut feeling. Begin by grasping the basic color schemes: use complementary colors (opposites on the color wheel) for a lively look, analogous colors (adjacent hues) for a cohesive feel, or triadic colors (three equally spaced hues) for a well-rounded vibe.
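The three schemes come down to rotating a base color around the color wheel. The sketch below uses Python’s standard `colorsys` module; the helper names `rotate_hue` and `palette` are made up for illustration, and the 30-degree spacing for analogous colors is an assumption, since designers use a range of values.

```python
import colorsys

def rotate_hue(hex_color: str, degrees: float) -> str:
    """Return hex_color with its hue rotated by the given number of degrees."""
    r, g, b = (int(hex_color[i:i + 2], 16) / 255 for i in (1, 3, 5))
    h, l, s = colorsys.rgb_to_hls(r, g, b)
    h = (h + degrees / 360) % 1.0
    r2, g2, b2 = colorsys.hls_to_rgb(h, l, s)
    return "#{:02x}{:02x}{:02x}".format(round(r2 * 255), round(g2 * 255), round(b2 * 255))

def palette(base: str) -> dict:
    """Build complementary, analogous, and triadic schemes from one base color."""
    return {
        "complementary": [base, rotate_hue(base, 180)],                   # opposite hue
        "analogous": [rotate_hue(base, -30), base, rotate_hue(base, 30)],  # adjacent hues (assumed 30 degrees)
        "triadic": [base, rotate_hue(base, 120), rotate_hue(base, 240)],   # three equally spaced hues
    }

for scheme, colors in palette("#ff0000").items():
    print(scheme, colors)
```

Starting from pure red, the complementary color is cyan and the triadic set is red, green, and blue, which matches the positions on a standard RGB color wheel.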

Online tools can be super helpful in crafting palettes and experimenting with combinations. However, it’s crucial not to overwhelm users. Aim for a blend of colors that work well together, striking the right balance of contrast for readability and accessibility.

Think about your brand image and the message you want to convey through your website when choosing colors. A curated color palette forms the foundation of a coherent and captivating web design.

Color plays a role in web design that goes beyond just the way it looks. By considering the psychological impact of color choices, designers can evoke emotions, direct users around a website, strengthen brand recognition, and create a memorable user experience.

A well-thought-out color scheme should align with the purpose of the website, using soothing colors for a healthcare site and lively shades for a kids’ brand, helping websites stand out in an online world.
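Contrast can be checked numerically rather than by eye. The sketch below follows the relative luminance and contrast ratio formulas from the WCAG 2.x guidelines, where a ratio of at least 4.5:1 is the AA threshold for normal-size text. The function names are made up for illustration.

```python
def relative_luminance(hex_color: str) -> float:
    """Relative luminance of an sRGB color, per the WCAG 2.x definition."""
    def linearize(channel: int) -> float:
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (int(hex_color[i:i + 2], 16) for i in (1, 3, 5))
    return 0.2126 * linearize(r) + 0.7152 * linearize(g) + 0.0722 * linearize(b)

def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1 (identical) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa(foreground: str, background: str) -> bool:
    """True if the pair meets the 4.5:1 AA threshold for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5

print(round(contrast_ratio("#000000", "#ffffff"), 2))
print(passes_aa("#ffffff", "#ffffff"))
```

A check like this is useful for button labels and body text, where a pleasing palette can still fail readability.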

Utilizing colors effectively can elevate a website from being visually attractive to being an instrument that influences user behavior positively and leaves a lasting impact.

- Emotional Impact: Color directly taps into our emotions, influencing how users feel as they interact with your website. Warm colors excite, cool colors calm, and the right combinations evoke specific feelings associated with your brand.

- Guiding the User: Strategic color use draws attention, creates emphasis, and improves usability. Contrasting colors make buttons pop, helping guide users towards your desired actions.

- Branding and Messaging: Your color palette becomes a core part of your brand identity. Users develop immediate associations, whether that’s the calming trustworthiness of a doctor’s office website or the playful energy of an online toy store.

- Standing Out: In a sea of websites, a well-designed color scheme makes you memorable. It separates you from competitors and creates a lasting impression that resonates with your target audience.

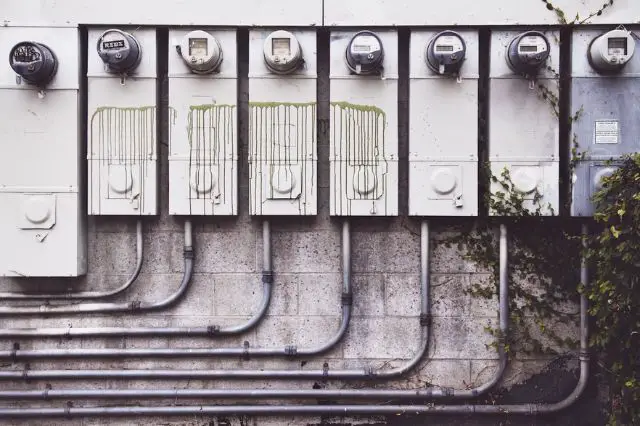

Taming the Current: Understanding and Preventing Electrical Overload

An electrical overload occurs when too much current is drawn through an electrical circuit, potentially leading to hazardous outcomes like fires or equipment damage. While both homes and businesses are at risk, industrial facilities with dense electrical infrastructure face some of the greatest overload hazards.

Think of electricity as water flowing through a hose. If you turn up the valve too high, the hose’s capacity will be exceeded, and you’ll get leaks, bursts, or damage from the excess pressure. Similar consequences happen when electrical current exceeds circuit capacities.

While momentary overloads may just trip a breaker, prolonged excessive current draws can lead to catastrophic equipment failures, fires, or other safety hazards. Industrial facilities are especially vulnerable due to their demands for dense electrical infrastructure.

Causes of Electrical Overloads

Several factors can contribute to electrical overloads:

- Circuit Overloading: This happens when too many devices are plugged into one circuit at a time. Even if those devices aren’t being actively used, their idle power draws accumulate and can overload the circuit. Daisy-chained power strips liberally loaded with devices are a common overload culprit.

- Faulty Equipment: Malfunctioning appliances, machinery, or wiring along a circuit can also directly contribute to overloads. Examples are electrical shorts, corroded connections, old wiring unable to handle modern appliance loads, or devices drawing higher idle currents than normal. Damaged insulation and exposed wires also pose risks.

- Ground Faults: Also referred to as leakage current, ground faults occur when electricity strays or leaks from its intended path and instead flows into the grounding system. Although grounding systems are designed to handle some leakage, excessive ground faults can lead to overloads.

- Power Surges: From lightning strikes, damaged transformers, faulty generators, and similar causes, power surges slam electrical systems with sudden bursts of excess current. Without adequate protection, this abnormal influx readily overloads circuits.

- Simultaneous High Demand: At certain times, multiple pieces of equipment along a circuit could simultaneously start drawing higher loads, cumulatively exceeding that circuit’s capacity even if those devices typically don’t pose issues independently. Think of an industrial motor, air compressor, and conveyor belt system all powering up at once.

Consequences of Electrical Overloads

Electrical overloads create substantial safety risks and can cause extensive equipment damage. Here are some common consequences posed by electrical overloads:

Tripped Circuit Breakers

Circuit breakers are designed to trip and cut power as an early-response safety mechanism against sustained overloads. While the breaker trip protects downstream equipment, it also disrupts device functionality until the breaker is reset. Tripped breakers directly impact productivity in facilities that rely on constant electrical machinery uptime for operations.

Electrical Fires

Overheating wires and connections pose serious fire hazards, especially in industrial settings where flammable materials or dust accumulation could be present nearby. According to National Fire Protection Association estimates, electrical overloads account for around 30,000 fires per year just within industrial facilities.

Damaged Equipment

Beyond fire risks, sustained electrical overloads can simply fry electrical components involved in the overloaded circuit. Everything from motors, transformers, conductors, insulators, and more is at risk of heat degradation/mechanical stresses. The costs of replacing damaged electrical infrastructure can be substantial.

Power Outages

If overload conditions persist long enough before a protective breaker trips, the immediate equipment could sustain damage, causing a power outage further down the circuit. In drastic cases, the overload itself could theoretically trip the main breaker/fuse, cutting power to large sections of your facility. Loss of power triggers even more costly productivity impacts and safety risks for staff.

Preventing Electrical Overloads

Facility managers overseeing large electrical loads have several options available to prevent hazardous overloads proactively:

- Identify Circuit Capacity: Maintaining updated electrical drawings with load calculations for each circuit is invaluable. These help identify under-capacity circuits at risk of overload, especially when adding new equipment. Periodic infrared scans also help monitor heating issues along wires.

- Practice Smart Plugging: Encourage staff to be mindful of available outlets and avoid daisy-chaining surge protectors to prevent overloading circuits. Strategically distribute equipment across available circuits.

- Upgrade Outdated Wiring: If older electrical infrastructure lacks the capacity to support modern power demands, upgrades may be warranted to bring things up to code and safely add capacity margin.

- Invest in Surge Protectors: Commercial surge protectors help absorb anomalous power surges at key equipment or service panel locations, preventing overloads. They regulate voltage levels when abnormal spikes occur.

- Schedule Electrical Inspections: While building codes require routine inspections to uncover any overlooked risks like damaged wires or faulty equipment that could prompt overloads, even more frequent proactive inspections are worthwhile for aging electrical systems. Thermal imaging and breaker testing are example inspection focus areas.

- Unplug Unused Appliances: Remind staff to unplug seldom-used devices and equipment rather than leaving them plugged in indefinitely. All those idle current draws accumulate gradually, overloading circuits.

- Use the Right Size Fuses/Circuit Breakers: When replacing aged fuses or circuit breakers, ensure new components are properly rated for the intended equipment’s power demands with some extra capacity margin built in. Undersized components pose overload risks.
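A basic load calculation for a single circuit can be sketched as follows. This is a simplified illustration, not an engineering tool: it assumes current equals power divided by voltage (ignoring power factor and motor inrush), and it assumes a planning limit of 80% of the breaker rating, a common rule of thumb for continuous loads. The device wattages and function names are made up for the example; actual limits should come from the applicable electrical code and a qualified electrician.

```python
def circuit_load_amps(device_watts: list, voltage: float) -> float:
    """Total current drawn by the devices, using the simplification I = P / V."""
    return sum(device_watts) / voltage

def is_overloaded(device_watts: list, voltage: float, breaker_amps: float,
                  continuous_factor: float = 0.8) -> bool:
    """True if the load exceeds the assumed planning limit for the breaker."""
    limit = breaker_amps * continuous_factor  # assumed 80% margin, not a code citation
    return circuit_load_amps(device_watts, voltage) > limit

# Hypothetical 120 V circuit on a 20 A breaker.
heavy = [1500, 600, 300]  # watts: 2400 W total
light = [600, 300]        # watts: 900 W total

print(circuit_load_amps(heavy, 120), is_overloaded(heavy, 120, 20))
print(circuit_load_amps(light, 120), is_overloaded(light, 120, 20))
```

Running the same check before adding equipment to a circuit shows how much capacity margin remains.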

The Role of Metal-Clad Switchgear in Overload Protection

For handling large electrical current capacities across industrial facilities while guarding against overloads, metal-clad switchgear offers a robust and safer power control solution:

- High interrupting capacity: Designed to withstand short circuit currents up to 200kA, metal-clad switchgear can safely isolate and redirect excessive overload currents away from vulnerable equipment, better avoiding hazards.

- Operator safety: Unlike open busbar switchgear designs, metal-enclosed switchgear incorporates grounded metal barriers around current-carrying components, greatly reducing electric shock risks for staff during switchgear operation or maintenance. Doors with safety interlocks also prevent opening while energized.

- Modular construction: With individual cubicles for various switchgear functions like circuit breakers or instrument transformers, failed components are easier to isolate and replace without affecting adjacent equipment availability. This supports shorter downtimes. The modular designs also help simplify future expansion needs.

Properly specified metal-clad switchgear provides a high level of protection at industrial sites and safely and efficiently manages immense power flows. Protecting this vital equipment from excessive currents prevents costly outages and dangerous conditions. With a robust electrical backbone in place, operations can continue uninterrupted.

Conclusion

Electrical overloads pose substantial risks ranging from minor outages to fires and injuries. Targeted prevention is possible by understanding their causes, from overloaded circuits to power surges.

Tactics like upgrading wiring, using surge protection, and ensuring properly sized breakers reduce overload likelihood. In industrial settings, investing in resilient metal-clad switchgear that provides a high level of protection manages extreme currents while insulating staff from harm.

With vigilance and safe infrastructure, electrical systems’ lifesaving protections will switch on the moment trouble arises.
